Software Safety Principles Conclusions and References is the sixth and final blog post on Principles of Software Safety Assurance. In this series, we look at the 4+1 principles that underlie all software safety standards. (The previous post in the series is here.)

Read on to Benefit From…

The conclusions of this paper are brief and readable, but very valuable. It’s important for us – as professionals and team players – to be able to express these things to managers and other stakeholders clearly. Talking to non-specialists is something that most technical people could do better.

The references include links to the standards covered by the paper. Unsurprisingly, these are some of the most popular and widely used processes in software engineering. The other links take us to the key case studies that support the conclusions.

Content

We outline common software safety assurance principles that are evident in software safety standards and best practices. You can think of these guidelines as the unchanging foundation of any software safety argument because they hold true across projects and domains.

The principles serve as a guide for cross-sector certification and aid in maintaining comprehension of the “big picture” of software safety issues while evaluating and negotiating the specifics of individual standards.

Conclusion

These six blog posts have presented the 4+1 model of foundational principles of software safety assurance. The principles strongly connect to elements of current software safety assurance standards and they act as a common benchmark against which standards can be measured.

Through the examples provided, it’s also clear that, although these concepts can be stated clearly, they haven’t always been put into practice. There may still be difficulties in how current standards apply them. In particular, there is still a great deal of research and discussion going on about the management of confidence with respect to software safety assurance (Principle 4+1).

[My own, informal, observations agree with this last point. Some standards apply Principle 4+1 more rigorously, but that rigor makes them more expensive. As a result, they are less popular and less widely used.]

Standards and References

[1] RTCA/EUROCAE, Software Considerations in Airborne Systems and Equipment Certification, DO-178C/ED-12C, 2011.

[2] CENELEC, EN-50128:2011 – Railway applications – Communication, signaling and processing systems – Software for railway control and protection systems, 2011.

[3] ISO-26262 Road vehicles – Functional safety, FDIS, International Organization for Standardization (ISO), 2011.

[4] IEC-61508 – Functional Safety of Electrical / Electronic / Programmable Electronic Safety-Related Systems. International Electrotechnical Commission (IEC), 1998.

[5] FDA, Examples of Reported Infusion Pump Problems, Accessed on 27 September 2012,

[6] FDA, FDA Issues Statement on Baxter’s Recall of Colleague Infusion Pumps, Accessed on 27 September 2012, http://www.fda.gov/NewsEvents/Newsroom/PressAnnouncements/ucm210664.htm

[7] FDA, Total Product Life Cycle: Infusion Pump – Premarket Notification 510(k) Submissions, Draft Guidance, April 23, 2010.

[8] “Report on the Accident to Airbus A320-211 Aircraft in Warsaw on 14 September 1993”, Main Commission Aircraft Accident Investigation Warsaw, March 1994, http://www.rvs.uni-bielefeld.de/publications/Incidents/DOCS/ComAndRep/Warsaw/warsaw-report.html Accessed on 1st October 2012.

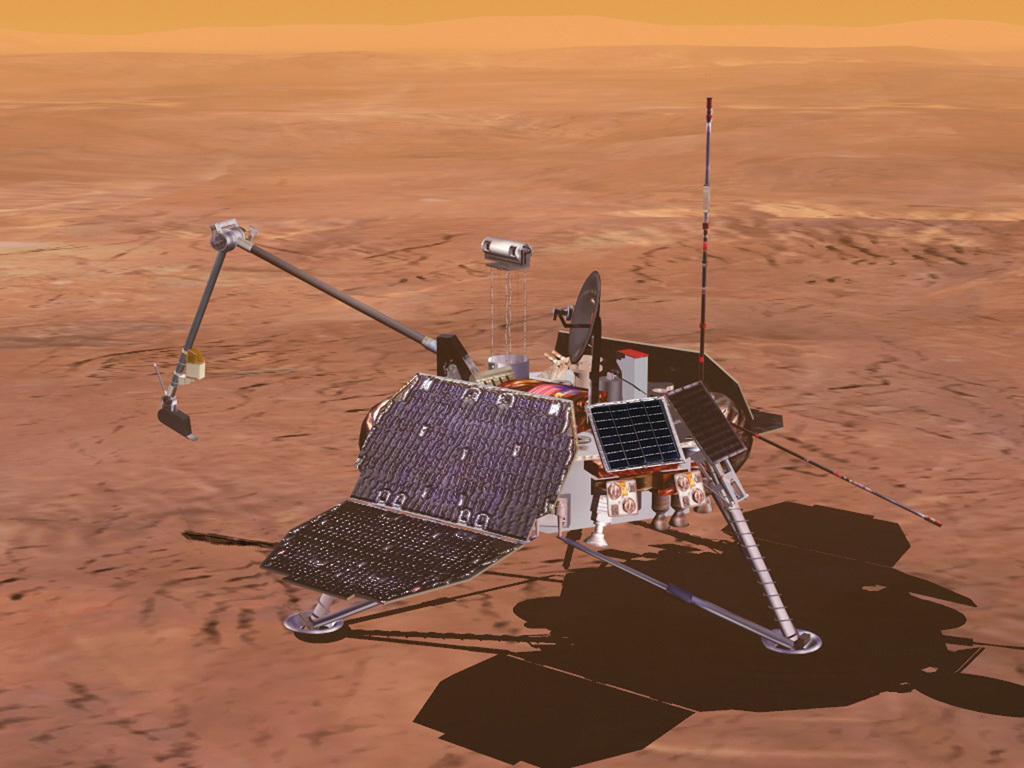

[9] JPL Special Review Board, “Report on the Loss of the Mars Polar Lander and Deep Space 2 Missions”, Jet Propulsion Laboratory, March 2000.

[10] Australian Transport Safety Bureau. In-Flight Upset Event 240 km North-West of Perth, WA, Boeing Company 777-200, 9M-MRG. Aviation Occurrence Report 200503722, 2007.

[11] H. Wolpe, General Accounting Office Report on Patriot Missile Software Problem, February 4, 1992, Accessed on 1st October 2012, Available at: http://www.fas.org/spp/starwars/gao/im92026.htm

[12] Y.C. Yeh, Triple-Triple Redundant 777 Primary Flight Computer, IEEE Aerospace Applications Conference, pp. 293-307, 1996.

[13] D.M. Hunns and N. Wainwright, Software-based protection for Sizewell B: the regulator’s perspective. Nuclear Engineering International, September 1991.

[14] R.D. Hawkins, T.P. Kelly, A Framework for Determining the Sufficiency of Software Safety Assurance. IET System Safety Conference, 2012.

[15] SAE. ARP 4754 – Guidelines for Development of Civil Aircraft and Systems. 1996.

Software Safety Principles: End of the Series

This blog post series was derived from ‘The Principles of Software Safety Assurance’, by RD Hawkins, I Habli & TP Kelly, University of York. The original paper is available for free here. I was privileged to be taught safety engineering by Tim Kelly, and others, at the University of York. I am pleased to share their valuable work in a more accessible format.

Meet the Author

My name’s Simon Di Nucci. I’m a practicing system safety engineer, and have been for the last 25 years. I’ve worked in all kinds of domains: aircraft, ships, submarines, sensors, and command and control systems, with some work on rail and air traffic management systems, and lots of software safety. So, I’ve done a lot of different things!

Principles of Software Safety Training

Learn more about this subject in my course ‘Principles of Safe Software’ here.

My course on Udemy, ‘Principles of Software Safety Standards’, is a cut-down version of the full Principles Course. Nevertheless, it still scores 4.42 out of 5.00 and attracts comments like:

- “It gives me an idea of standards as to how they are developed and the downward pyramid model of it.” 4* Niveditha V.

- “This was really good course for starting the software safety standareds, comparing and reviewing strengths and weakness of them. Loved the how he try to fit each standared with 4+1 principles. Highly recommend to anyone that want get into software safety.” 4.5* Amila R.

- “The information provides a good overview. Perfect for someone like me who has worked with the standards but did not necessarily understand how the framework works.” 5* Mahesh Koonath V.

- “Really good overview of key software standards and their strengths and weaknesses against the 4+1 Safety Principles.” 4.5* Ann H.