Software Safety Principles 2 and 3 is the second in a new series of blog posts on Principles of Software Safety Assurance. In it, we look at the 4+1 principles that underlie all software safety standards. (The previous blog post is here.)

We outline common software safety assurance principles that are evident in software safety standards and best practices. You can think of these guidelines as the unchanging foundation of any software safety argument because they hold true across projects and domains.

The principles serve as a guide for cross-sector certification and aid in maintaining comprehension of the “big picture” of software safety issues while evaluating and negotiating the specifics of individual standards.

Principle 2: Requirement Decomposition

The second software safety principle is:

Principle 2: The intent of the software safety requirements shall be maintained throughout requirements decomposition.

‘The Principles of Software Safety Assurance’, RD Hawkins, I Habli & TP Kelly, University of York.

As the software development lifecycle progresses, the requirements and design are progressively decomposed, producing a more detailed software design. The requirements derived for this more detailed design are known as “derived software requirements”. Once the software safety requirements have been established as complete and correct at the highest (most abstract) level of design, their intent must be maintained as they are decomposed.

An example of the failure of requirements decomposition is the crash of Lufthansa Flight 2904 at Warsaw on 14 September 1993.

In essence, the issue is one of ongoing requirements validation. How do we show that the requirements expressed at one level of design abstraction are equivalent to those defined at a more abstract level? This difficulty arises repeatedly throughout the software development process.

It is not enough to consider requirements satisfaction alone. In the Flight 2904 example, the software safety requirements had been met. However, in the real world they did not match the intent of the high-level safety requirements.
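To make the gap between satisfaction and intent concrete, here is a minimal Python sketch. It is loosely modelled on commonly reported accounts of the Flight 2904 accident, but the function names, thresholds, and scenario values are all assumptions made for illustration; none of them come from the paper or from the aircraft's actual software. The derived requirement is implemented exactly as written, yet in one plausible real-world scenario it fails to capture the high-level intent.

```python
from dataclasses import dataclass

# Illustrative thresholds (assumptions, not values from the source paper).
MIN_STRUT_LOAD_TONNES = 6.3
MIN_WHEEL_SPEED_KNOTS = 72.0


@dataclass
class SensorState:
    left_strut_load_tonnes: float
    right_strut_load_tonnes: float
    wheel_speed_knots: float


def derived_on_ground(s: SensorState) -> bool:
    """Hypothetical derived requirement: treat the aircraft as 'on the ground'
    when both main gear struts are loaded OR the wheels have spun up."""
    both_struts_loaded = (
        s.left_strut_load_tonnes >= MIN_STRUT_LOAD_TONNES
        and s.right_strut_load_tonnes >= MIN_STRUT_LOAD_TONNES
    )
    wheels_spun_up = s.wheel_speed_knots >= MIN_WHEEL_SPEED_KNOTS
    return both_struts_loaded or wheels_spun_up


def braking_aids_enabled(s: SensorState) -> bool:
    """Implementation that satisfies the derived requirement exactly."""
    return derived_on_ground(s)


# Assumed scenario: touchdown on one main gear on a wet runway, with the
# wheels aquaplaning so that they do not spin up.
actually_landed = True  # ground truth the high-level requirement cares about
crosswind_landing = SensorState(
    left_strut_load_tonnes=1.0,
    right_strut_load_tonnes=8.0,
    wheel_speed_knots=20.0,
)

enabled = braking_aids_enabled(crosswind_landing)
intent_preserved = enabled == actually_landed

print(f"braking aids enabled:        {enabled}")
print(f"high-level intent preserved: {intent_preserved}")
```

The code does what the derived requirement says, so verification against that requirement would pass. Only validation against the more abstract requirement ("braking aids are available once the aircraft has landed") exposes the problem.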

Human factors issues are another consideration that can make it harder to preserve intent through decomposition. For example, a warning may be presented to a pilot as required, yet go unnoticed on busy cockpit displays.

One theoretical solution is to ensure that all the necessary detail is included in the initial high-level requirement. In practice, this would be very difficult to achieve. Design decisions that call for more detailed requirements will inevitably be made later in the software development lifecycle, and that detail cannot be known accurately until the design decision has been made.

The decomposition of safety requirements must always be managed if the software is to be considered safe to use.

Principle 3: Requirements Satisfaction

The third software safety assurance principle is:

Principle 3: Software safety requirements shall be satisfied.

‘The Principles of Software Safety Assurance’, RD Hawkins, I Habli & TP Kelly, University of York.

Once a set of “valid” software safety requirements has been defined, it must be confirmed that they have been met. This set may consist of allocated software safety requirements (Principle 1), or refined or derived software safety requirements (Principle 2). A crucial prerequisite for satisfaction is that these requirements are precise, well-defined, and actually verifiable.

The verification techniques used to show that the software safety requirements have been met will depend on the degree of safety criticality, the stage of development, and the technology being used. Attempting to prescribe specific verification techniques for generating verification evidence is therefore neither practical nor wise.
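As a rough illustration of what “verifiable” means in practice, the sketch below contrasts a vague requirement with a precise one that can be checked mechanically. The requirement wording, the 0.5-second limit, the event names, and the log format are all hypothetical.

```python
from typing import List, Optional, Tuple

# Vague (not verifiable): "The system shall respond to overpressure quickly."
# Precise (hypothetical): "The relief valve command shall be issued no more
# than 0.5 s after tank pressure first exceeds the limit."

MAX_RESPONSE_SECONDS = 0.5  # assumed limit, for illustration only

Event = Tuple[float, str]  # (timestamp in seconds, event name)


def response_time(events: List[Event]) -> Optional[float]:
    """Return the seconds between the first 'overpressure' event and the
    first later 'valve_open' event, or None if either is missing."""
    start = None
    for timestamp, name in events:
        if name == "overpressure" and start is None:
            start = timestamp
        elif name == "valve_open" and start is not None:
            return timestamp - start
    return None


def requirement_met(events: List[Event]) -> bool:
    """Check a recorded event log against the precise requirement."""
    elapsed = response_time(events)
    return elapsed is not None and elapsed <= MAX_RESPONSE_SECONDS


on_time_log = [(10.00, "overpressure"), (10.30, "valve_open")]
late_log = [(10.00, "overpressure"), (10.90, "valve_open")]
no_response_log = [(10.00, "overpressure")]

for label, log in [("on time", on_time_log), ("late", late_log),
                   ("no response", no_response_log)]:
    print(f"{label}: requirement met = {requirement_met(log)}")
```

No equivalent check can be written for the vague version, because “quickly” gives no pass or fail criterion.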

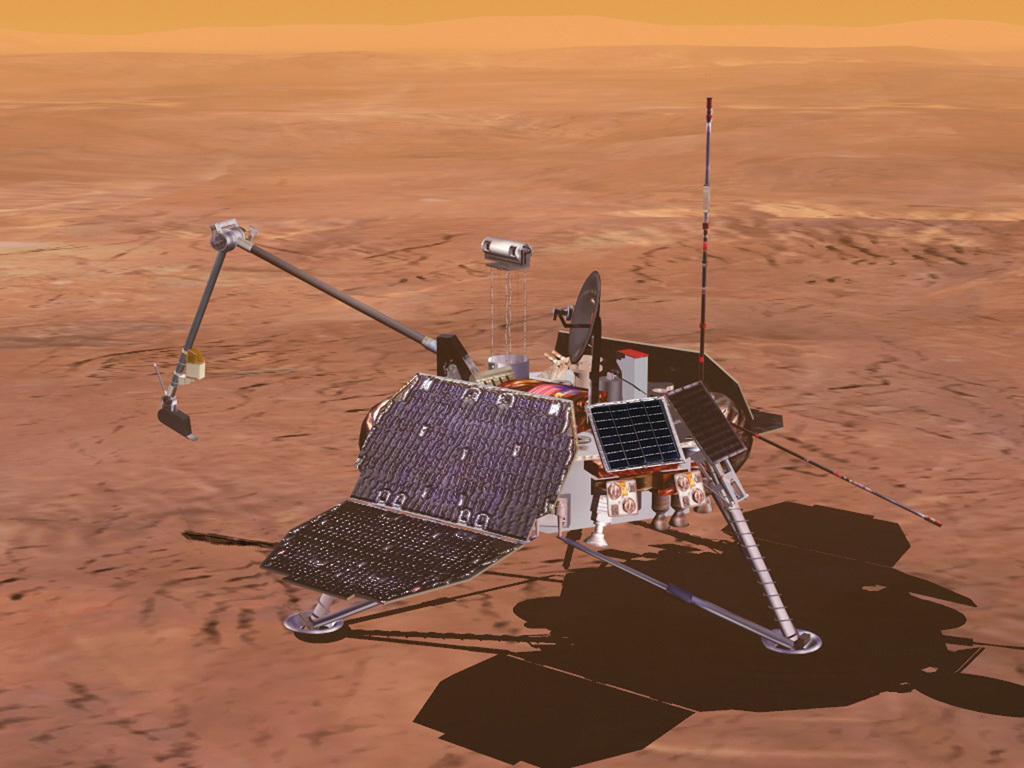

Mars Polar Lander was an ambitious mission to set a spacecraft down near the edge of Mars’ south polar cap and dig for water ice. The mission was lost on arrival on December 3, 1999.

Given the complexity and safety-critical nature of many software-based systems, it is clear that a single type of software verification is insufficient. As a result, a combination of verification techniques is frequently required to produce the verification evidence. Testing and expert review are frequently used to produce primary or secondary verification evidence. However, formal verification is increasingly emphasized because it can offer more rigorous evidence that the software safety requirements have been satisfied.

The main obstacle to demonstrating that the software safety requirements have been met is the inherent limitations of the evidence produced by the techniques described above. These difficulties are rooted in the characteristics of the problem space.

Given the complexity of software systems, especially those used to achieve autonomous capabilities, there are challenges with completeness for both testing and analysis methodologies. The underlying logic of the software can be verified using formal methods, but there are still significant drawbacks. Namely, it is difficult to provide assurance of model validity. Also, formal methods do not deal with the crucial problem of hardware integration.
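A back-of-the-envelope calculation shows why completeness is such a challenge for testing. The sketch below assumes a hypothetical function with three independent 32-bit inputs and an optimistic rate of one million test cases per second; both figures are illustrative, not taken from the paper.

```python
# Assumed, illustrative figures.
INPUT_BITS = 32
NUM_INPUTS = 3
TESTS_PER_SECOND = 1_000_000
SECONDS_PER_YEAR = 365 * 24 * 60 * 60

# Every combination of input values is a distinct test case.
total_cases = 2 ** (INPUT_BITS * NUM_INPUTS)
years = total_cases / (TESTS_PER_SECOND * SECONDS_PER_YEAR)

print(f"{total_cases:.3e} input combinations")
print(f"{years:.3e} years to test them all")
```

Even under these generous assumptions, exhaustive testing would take on the order of 10^15 years, and that is before internal state, timing, or hardware interaction are considered. This is one reason testing evidence is usually combined with analysis and review.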

Clearly, the capacity to meet the stated software safety requirements is a prerequisite for ensuring the safety of software systems.

Software Safety Principles 2 & 3: End of Part 2 (of 6)

This blog post is derived from ‘The Principles of Software Safety Assurance’, RD Hawkins, I Habli & TP Kelly, University of York. The original paper is available for free here. I was privileged to be taught safety engineering by Tim Kelly, and others, at the University of York. I am pleased to share their valuable work in a more accessible format.

Meet the Author

My name’s Simon Di Nucci. I’m a practicing system safety engineer, and I have been for the last 25 years. I’ve worked in all kinds of domains: aircraft, ships, submarines, sensors, and command and control systems, with some work on rail and air traffic management systems, and lots of software safety. So, I’ve done a lot of different things!

Learn more about this subject in my course ‘Principles of Safe Software’ here. The next post in the series is here.